Testing precise timed replay from packet captures

Following on from the published article in eForex about the Beeks mdPlay service, we take a deeper dive into how this technology works, and why customers find it so valuable.

Firstly, it’s important to understand that high-volume market data from an exchange is usually distributed using UDP multicast. Christoph Lameter gave a good overview in 2016 of why multicast became the technology of choice here.

But why replay market data?

Algo performance validation, backtesting, stress testing, and regulations

Another, more recent need for accurate market data replay comes from the MiFID II regulations, which have now been in force for over six years. These mandate testing of algorithmic systems (and associated controls and procedures) at double historic peak volumes (as identified in the specific regulations on stress testing and on trading venue capacity). As a result, we had a clear requirement that mdPlay should make it easy to scale market data replays up (or down), and should allow multiple different packet captures to be replayed concurrently (so that peaks from multiple venues, or peaks recorded with different packet capture servers, could be combined in pre-production). This is good practice even for venues outside the scope of MiFID II. It is especially topical given the challenges that firms face in scaling up their infrastructure to accommodate the OPRA bandwidth increase caused by the February 2024 move from 48 lines to 96 lines.
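As a rough illustration of what scaling and combining replays involves, here is a minimal sketch. It is not a description of how mdPlay is implemented. It assumes that volume is scaled by compressing the gaps between packets (so a `speed` of 2 doubles the packet rate), and that each capture is represented only by its packet send offsets in nanoseconds. The function names, the `speed` parameter and the venue labels are all hypothetical.

```python
import heapq

def scale_schedule(offsets_ns, speed):
    """Compress (speed > 1) or stretch (speed < 1) a replay schedule.

    offsets_ns: send times in nanoseconds relative to the first packet.
    speed:      2 means every gap is halved, i.e. double the packet rate.
    """
    if speed <= 0:
        raise ValueError("speed must be positive")
    return [round(offset / speed) for offset in offsets_ns]

def merge_schedules(schedules):
    """Interleave several per-capture schedules into one ordered send list.

    schedules: dict mapping a capture label to its sorted list of offsets.
    Returns a list of (offset_ns, label) tuples in send order.
    """
    streams = (
        [(offset, label) for offset in offsets]
        for label, offsets in schedules.items()
    )
    return list(heapq.merge(*streams))

# Hypothetical example: venue A replayed at double rate alongside venue B.
venue_a = scale_schedule([0, 1000, 3000], speed=2)
combined = merge_schedules({"venue_a": venue_a, "venue_b": [200, 600]})
```

Working on offsets rather than packet payloads keeps the sketch small; a real replay would also need to carry the packet data and respect the line rate of the transmitting port.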

The downsides of feed handler market data replay

It does not replicate microbursts and network behaviour

mdPlay takes a different approach. Rather than playing back from logs, it plays back from independent packet captures of the data, and rather than playing directly into an application, it transmits the traffic onto the network. This ensures a higher fidelity playback than the feed handler replay approach — you can understand the interplay of your physical network infrastructure and your application.

The other key aim that we had for mdPlay was for the replay to have nanosecond accuracy. Financial market data is characterised by microbursts. A financial firm needs to reproduce microbursts accurately so that it can make sure its algorithms (whether they run as software on a standard CPU or are embedded in an FPGA) can handle these bursts, and so that it can reliably recreate any issues. For these types of firms, mdPlay needs to offer replays that quickly pump out a very high bandwidth pulse of data without spreading it over a wider range of time. The replayed pulse must also look just like the original pulse that may have caused the original issue.

When we developed mdPlay, Beeks looked at three different techniques to ensure we picked the technology that could provide the highest fidelity replay. We assessed fidelity by comparing the replay results with the original market data packet captures.

Replay Test Methodology

To conduct these tests, we initially captured a busy hour of market data traffic from one of the world’s largest exchanges. The particular exchange was chosen because we knew it to be representative of the type of data our clients are likely to wish to replay. The capture was timestamped with 10 nanosecond tick precision.

We then transmitted this data using each of the three different tools, and re-captured it (for this we used a specific type of switch known to introduce very little jitter). We also synchronised the clocks on the capture side and the re-transmit side as accurately as possible, using either PTP or (where feasible) a PPS signal. Having performed these tasks, we were then able to compare the original capture with the new one.

For each packet in each capture, we calculated the timestamp delta from the first packet in the capture (the “absolute” timestamp delta), and the timestamp delta to the previous packet in the capture (the “frame-to-frame” delta). We then compared these figures to see how close the new capture was to the original. A difference in the absolute timestamp delta demonstrated drift in the replay mechanism, and we interpreted a difference in the frame-to-frame delta as the amount of jitter in the replay. Here’s what we found…
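The comparison described above can be sketched in a few lines. This is a minimal illustration rather than the tooling we used: it assumes that both captures have already been reduced to lists of integer nanosecond timestamps, and that packet i in the original corresponds to packet i in the replay (no loss or reordering). The function names and sample values are hypothetical.

```python
def deltas(timestamps_ns):
    """Return (absolute, frame_to_frame) deltas for one capture.

    absolute[i]       = time since the first packet in the capture
    frame_to_frame[i] = time since the previous packet (0 for the first)
    """
    if not timestamps_ns:
        return [], []
    first = timestamps_ns[0]
    absolute = [t - first for t in timestamps_ns]
    frame_to_frame = [0] + [
        later - earlier
        for earlier, later in zip(timestamps_ns, timestamps_ns[1:])
    ]
    return absolute, frame_to_frame

def compare_captures(original_ns, replay_ns):
    """Per-packet drift and jitter of a replay relative to the original."""
    if len(original_ns) != len(replay_ns):
        raise ValueError("captures must contain the same number of packets")
    orig_abs, orig_f2f = deltas(original_ns)
    rep_abs, rep_f2f = deltas(replay_ns)
    # Difference in absolute deltas: how far the replay has drifted so far.
    drift = [r - o for o, r in zip(orig_abs, rep_abs)]
    # Difference in frame-to-frame deltas: jitter on each individual gap.
    jitter = [r - o for o, r in zip(orig_f2f, rep_f2f)]
    return drift, jitter

# Hypothetical timestamps in nanoseconds.
original = [1000, 1100, 1300, 1350]
replay = [5000, 5110, 5300, 5370]
drift, jitter = compare_captures(original, replay)
```

Because both measures are taken relative to each capture's own first packet, the two captures do not need to share a common start time.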

Replay Test Technologies

We looked at three technologies for our testing:

- Tcpreplay, a GPLv3-licensed Unix tool (despite the name, it can also replay UDP). It does not require dedicated replay hardware.

- Two commercially available replay libraries, provided by smart NIC manufacturers. Each of these offerings requires its manufacturer’s smart NICs.

Replay Test Results

The clear winner…

The best results that we found were with one of the commercially available replay libraries (we’ll refer to it as the ‘premium’ platform). Its accuracy significantly surpassed that of the other two offerings: it achieved +/-20 nanosecond replay accuracy, with all packets retransmitted.

This particular replay mechanism ran on dedicated replay hardware, and even when challenged with transmitting a big buffer of packets at once, it was able to replay the packets accurately using its on-board clock. In doing so, the premium technology eliminated the situation where the replay mechanism holds a packet and has to wait for the operating system (OS) to reach a certain time before it can pass the next packet to the card. This is because the packet is already on the card, and the card does the retransmission in hardware.

Synchronised market data replay

This was also the only platform able to synchronise replays on different ports accurately. In fact, the other platforms didn’t offer any features in their standard tool kits to do this; it had to be done by hand. For clients operating algo trading systems in ultra-time-sensitive environments, the ability to accurately synchronise multiple replays on different ports can be very useful, as it ensures that packets are put on to the wire in the correct order.

Runner-Up Performance

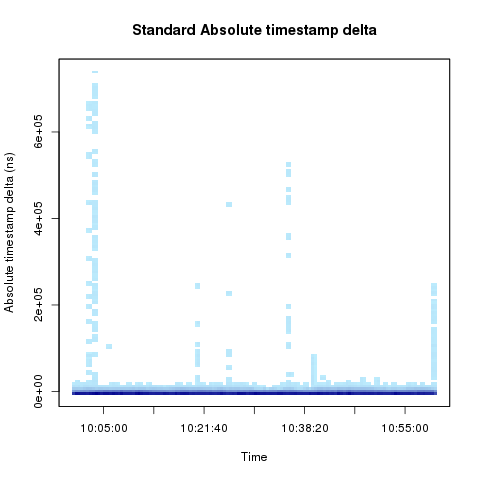

By comparison, the second commercially available replay mechanism we tested (we’ll refer to it as the ‘standard’ offering) accurately retransmitted the vast majority of packets, but did experience occasional jitter and delays of up to 700 microseconds. This was limited to a very small fraction of the total number of packets transmitted: 99.355% of all packets were replayed within 1100 nanoseconds of their timing in the original capture file.
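A headline figure of this kind is the share of per-packet timing errors that fall within a tolerance. The sketch below shows one way to compute it, assuming the errors are already available as a list of signed nanosecond values. The function name `fraction_within` and the sample errors are hypothetical and are not taken from our test data.

```python
def fraction_within(errors_ns, tolerance_ns):
    """Fraction of packets whose absolute timing error is <= tolerance_ns."""
    if not errors_ns:
        raise ValueError("no timing errors supplied")
    within = sum(1 for error in errors_ns if abs(error) <= tolerance_ns)
    return within / len(errors_ns)

# Hypothetical errors: four packets close to target, one delayed by 700 us.
sample_errors_ns = [5, -20, 1100, 700_000, 300]
share = fraction_within(sample_errors_ns, tolerance_ns=1100)
worst_ns = max(abs(error) for error in sample_errors_ns)
```

Reporting the worst-case error alongside the fraction is useful here, because a small number of large outliers can matter more to a latency-sensitive system than the typical error.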

Like the other commercial replay technology, this platform also required specific replay hardware, but with this particular tool the packets waited in software for the right time to be released. (However, it should be said that once that time was reached, the card was able to retransmit the packets very quickly, as optimisation techniques avoided the need for the packets to travel through many of the OS layers.)

Tcpreplay accuracy

An open source tool — best price/performance trade-off for retail traders, not suitable for trading firms

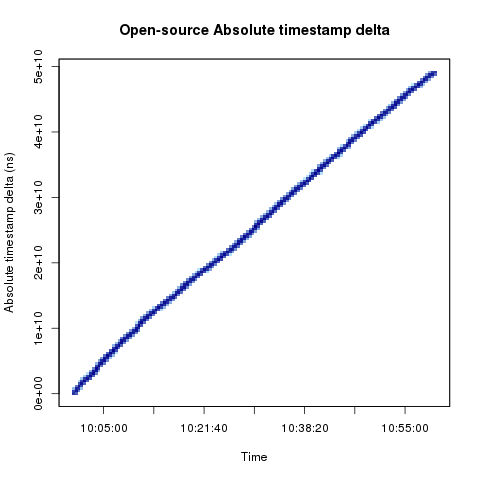

The final replay mechanism tested was an open source tool that does not require dedicated replay hardware. Because Tcpreplay retransmitted files through a regular interface (rather than employing optimisation techniques to bypass certain OS layers), the retransmission accuracy suffered.

Furthermore, whilst the other platforms were able to handle nanosecond precision captures, Tcpreplay could only read the previously captured data with microsecond precision.

An interesting feature of the open source platform (clearly visible in the chart above) was the drift it introduced over time. The timing errors on each packet accumulated, so the replay fell steadily further behind. In one of the tests we ran, an hour’s traffic took an hour and a minute to replay.
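To put that drift in proportion, the arithmetic can be sketched as follows. The durations come from the test described above; the packet count is purely hypothetical (we are not quoting the real figure here), and the function names are illustrative.

```python
def drift_ppm(original_duration_s, replay_duration_s):
    """Replay overrun expressed in parts per million of the original duration."""
    if original_duration_s <= 0:
        raise ValueError("original duration must be positive")
    overrun_s = replay_duration_s - original_duration_s
    return overrun_s / original_duration_s * 1_000_000

def mean_overrun_ns_per_packet(original_duration_s, replay_duration_s, packet_count):
    """Average delay that each packet would add if drift accumulated evenly."""
    if packet_count <= 0:
        raise ValueError("packet count must be positive")
    overrun_ns = (replay_duration_s - original_duration_s) * 1_000_000_000
    return overrun_ns / packet_count

# One hour of traffic replayed in one hour and one minute.
ppm = drift_ppm(3600, 3660)

# HYPOTHETICAL packet count, used only to show the scale of the per-packet error.
per_packet_ns = mean_overrun_ns_per_packet(3600, 3660, packet_count=60_000_000)
```

A one-minute overrun on an hour is about 1.7% of the original duration. With the hypothetical 60 million packets, an average of only one microsecond of extra delay per packet would be enough to produce it, which shows how small per-packet errors add up over a long replay.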

In summary, there was a mild decline in accuracy between the first and second replay mechanisms we tested, and a more substantial gap between these commercial offerings and the open source tool. However, it’s also important to consider the cost/accuracy trade-off these platforms present. For firms for whom nanosecond accuracy isn’t essential, the balance may easily swing towards the open source mechanism. Certainly for individual retail traders, Tcpreplay (or even bespoke software replay) is likely to be sufficient.

Wrapping a service around the best technology

100Gbps capture and analytics

We don’t have space in this article to go into more depth on the other features that we built into the market data replay (mdPlay) product to make it easy to use for our financial clients. Briefly, these include scenario management, an easily manageable library of captures, and integration with our analytics technology, both to record the packet captures at high speed (we are capable of 100Gbps packet capture) and to provide visibility of the quality (gaps, latency) of the incoming feed.

I welcome any questions you have on these findings and would be more than happy to discuss with you which replay mechanism may prove most appropriate in meeting your firm’s requirements.